Since Tim and I built Microwulf back in 2007, I've been contacted by a number of people who have built their own clusters that they said were inspired by the Microwulf design. I thought it would be fun to collect them and other related systems all together on a single page. You can click on any of the pictures to get a larger image.

|

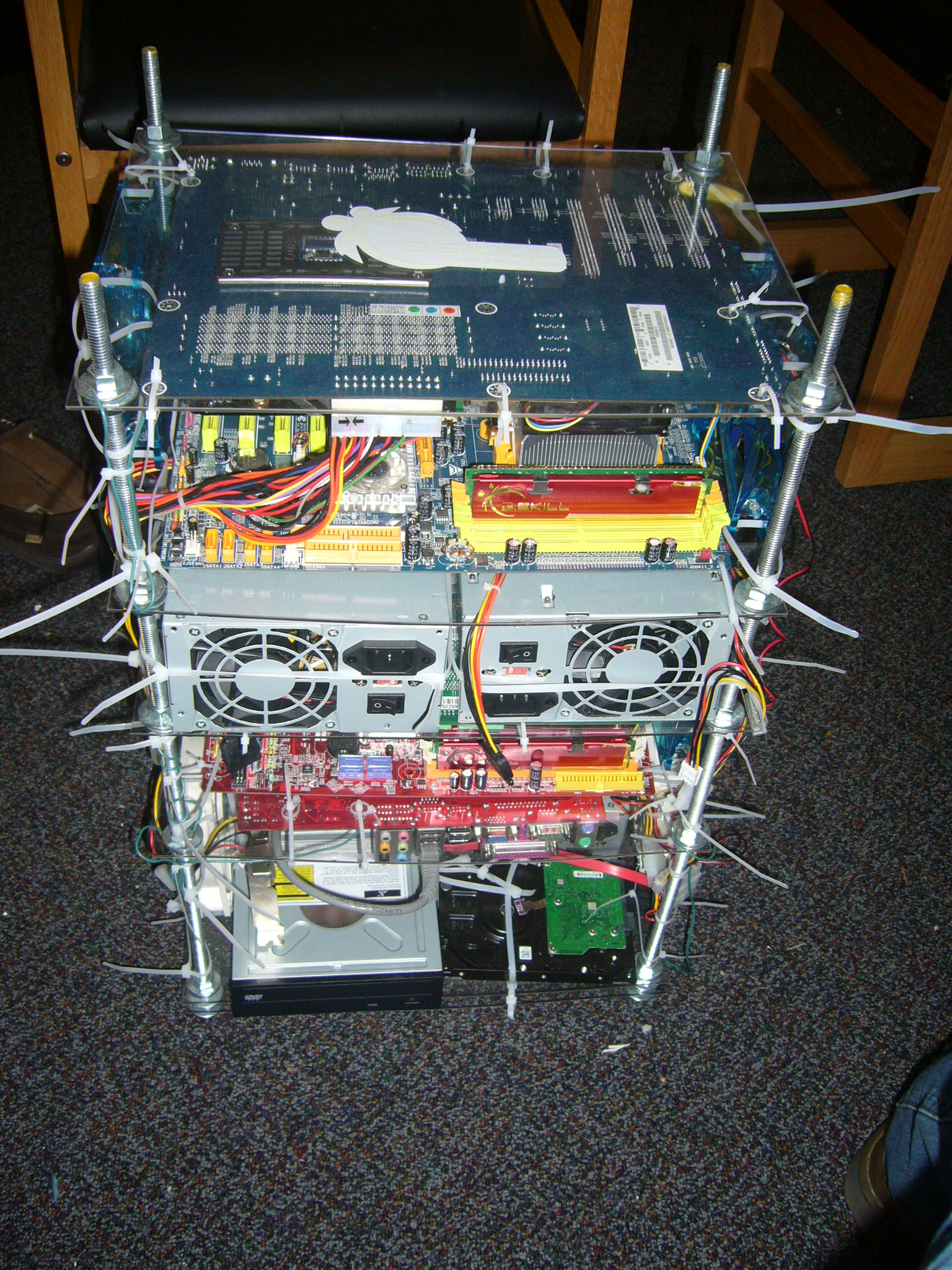

The first people to contact me with pictures of a working Microwulf-inspired system were Sumesh, Arif, and Alok, three 8th-semester CS students from the IHRD College of Engineering, Attingal, in India. Like Microwulf's nodes, the Attingal cluster's nodes all have dual-core CPUs, all boot from a single hard disk, and communicate via Gigabit Ethernet. They report they had access to "cheap cases", so they used them rather than building a custom small-form-factor case.

|

|

|

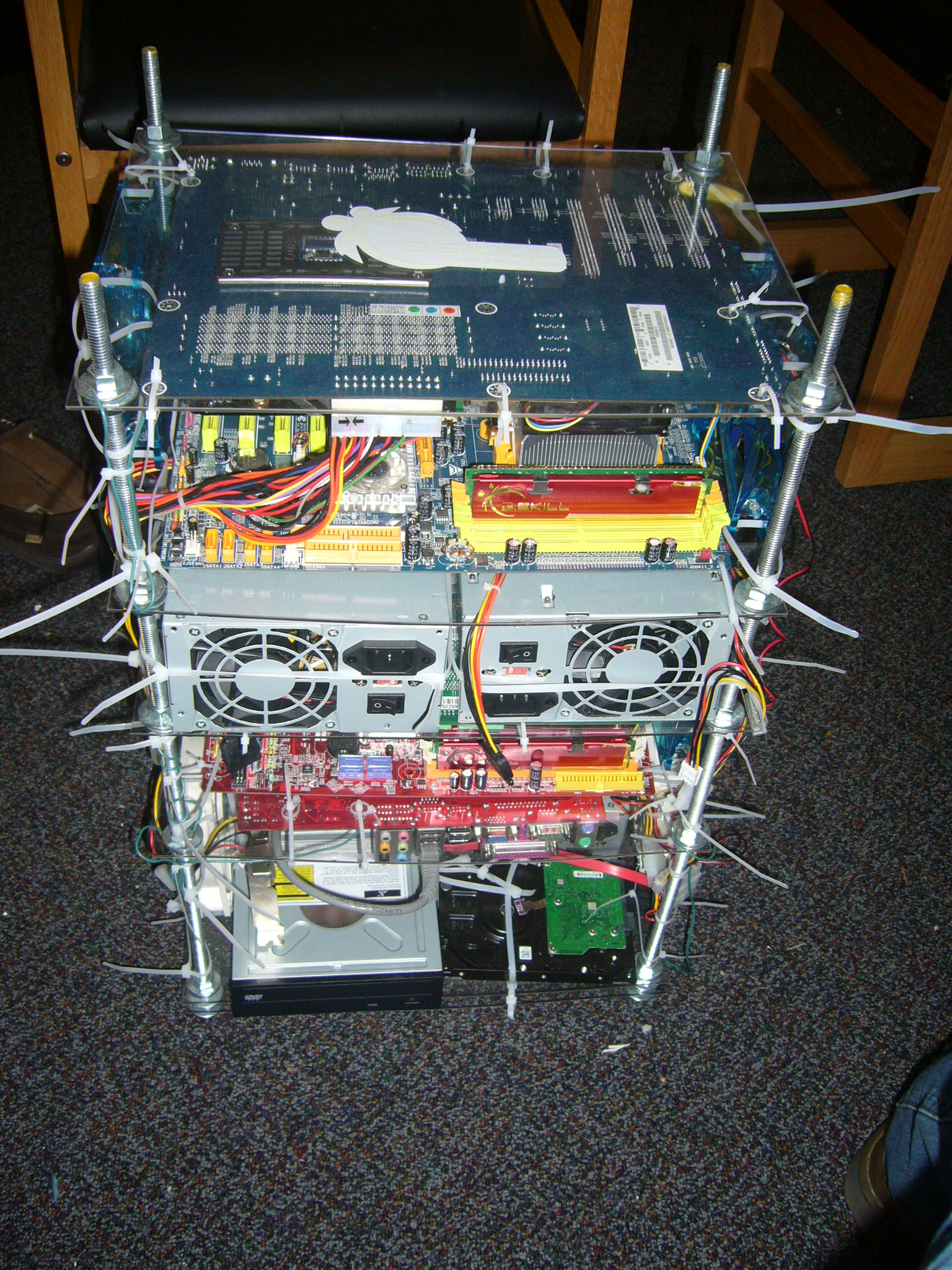

The second working "offspring" I heard about was "CamWulf", built by a student known only to us as o21171, who was studying EET at Cameron University in Lawton, Oklahoma (USA). CamWulf appears to follow the Microwulf design pretty closely.

|

|

|

The third "offspring" I heard about was from "DeWang", a PhD student at the University of Electronic Science and Technology of China in Chengdu, China. Like CochinWulf (above), this cluster follows the Microwulf design, but it puts each node in a separate case.

|

|

|

The next related cluster I heard about was Norbert, a cluster based on the design from the Limulus Project (LInux MULti-core Unified Supercomputer), which is the brainchild of Jeff Layton and Doug Eadline. Jeff was a co-author of our 2007 article on the ClusterMonkey website, which Doug runs.

|

|

|

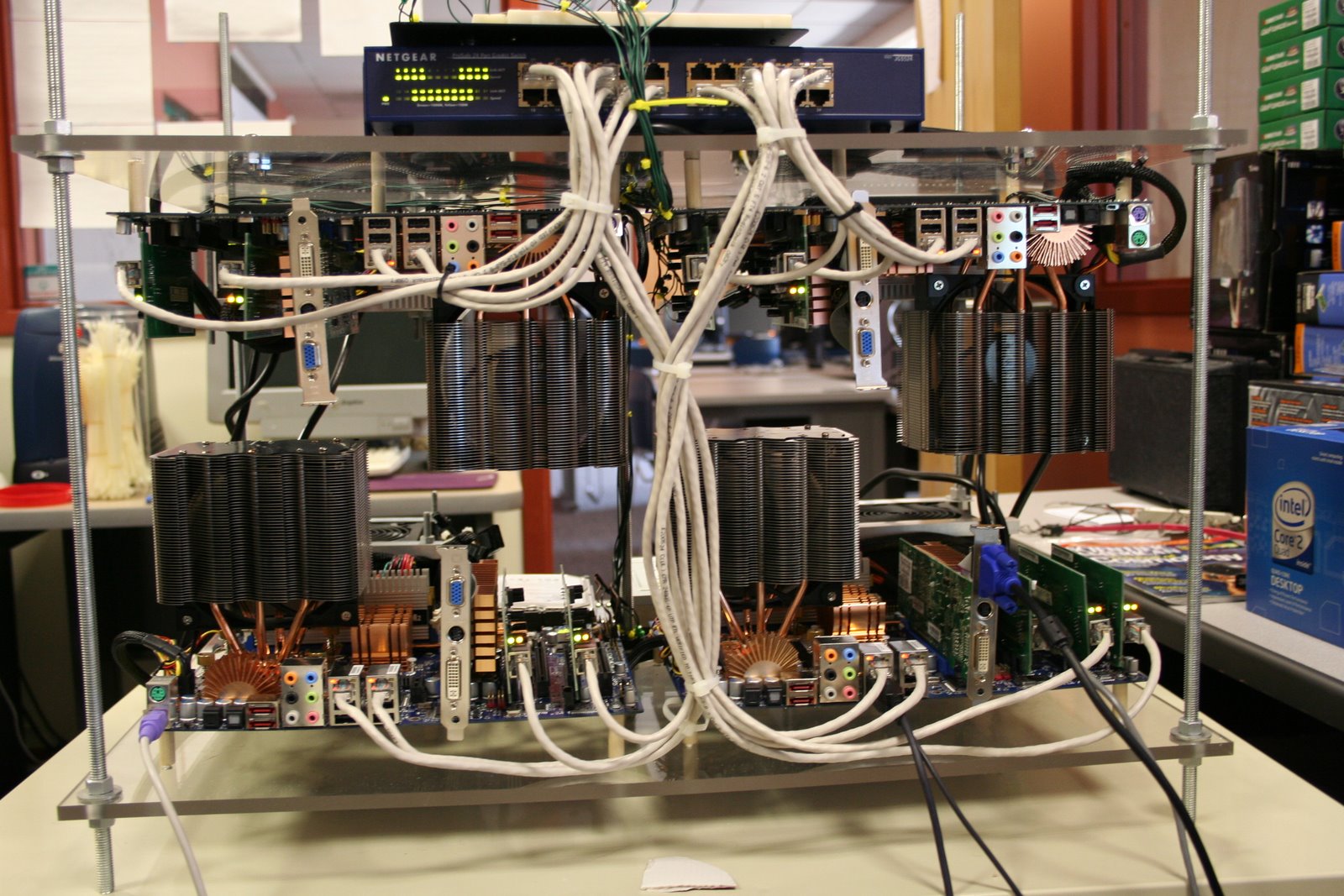

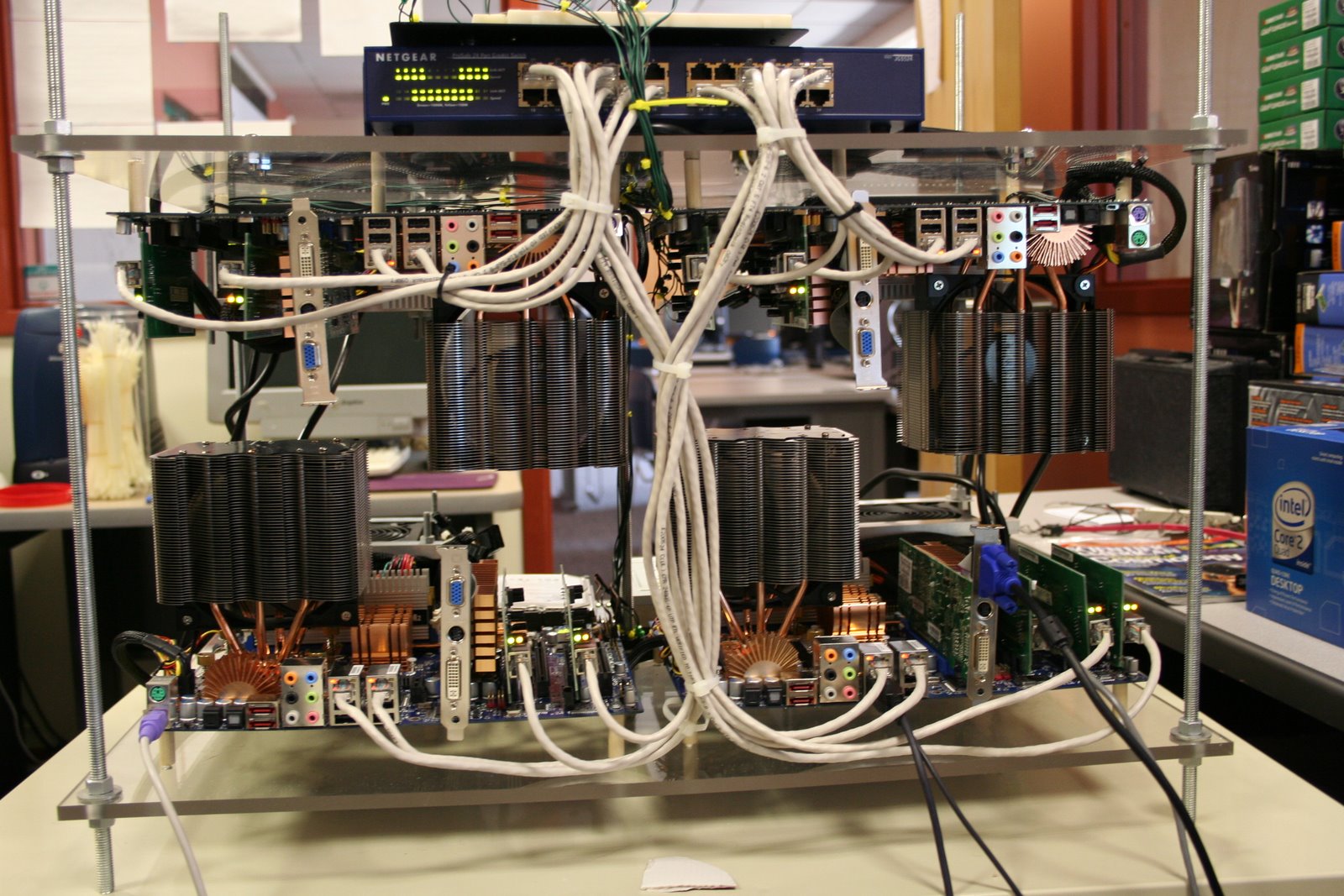

The next "offspring" I heard about was Quadrowulf, built by Justin Moore and Dr. Hayden S. Porter at Furman University in Greenville, South Carolina (USA). Quadrowulf was, to our knowledge, the first Microwulf-style cluster to use quad-core CPUs. Their website provides excellent documentation of the software configuration process. All of their project photos are available at Justin's Picasaweb site.

|

|

|

The next "offspring" system I heard about was "BlueWulf", built by Chad Nelson, who lives in Virginia (USA).

|

|

|

The next "offspring" I'll call "ErnstWulf", since it was built by Dan Ernst at the University of Wisconsin-Eau Claire, in Eau Claire, Wisconsin (USA).

|

|

|

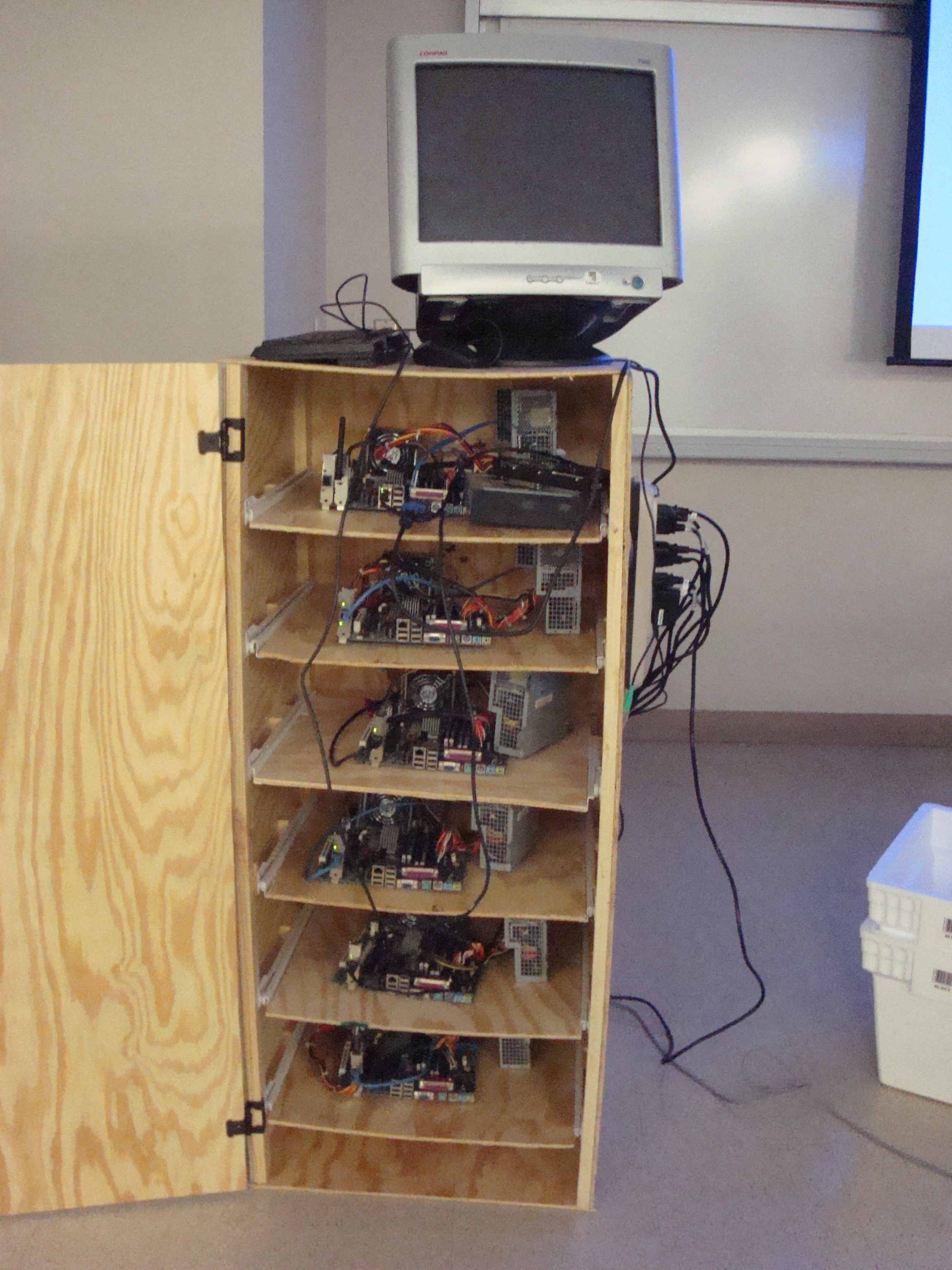

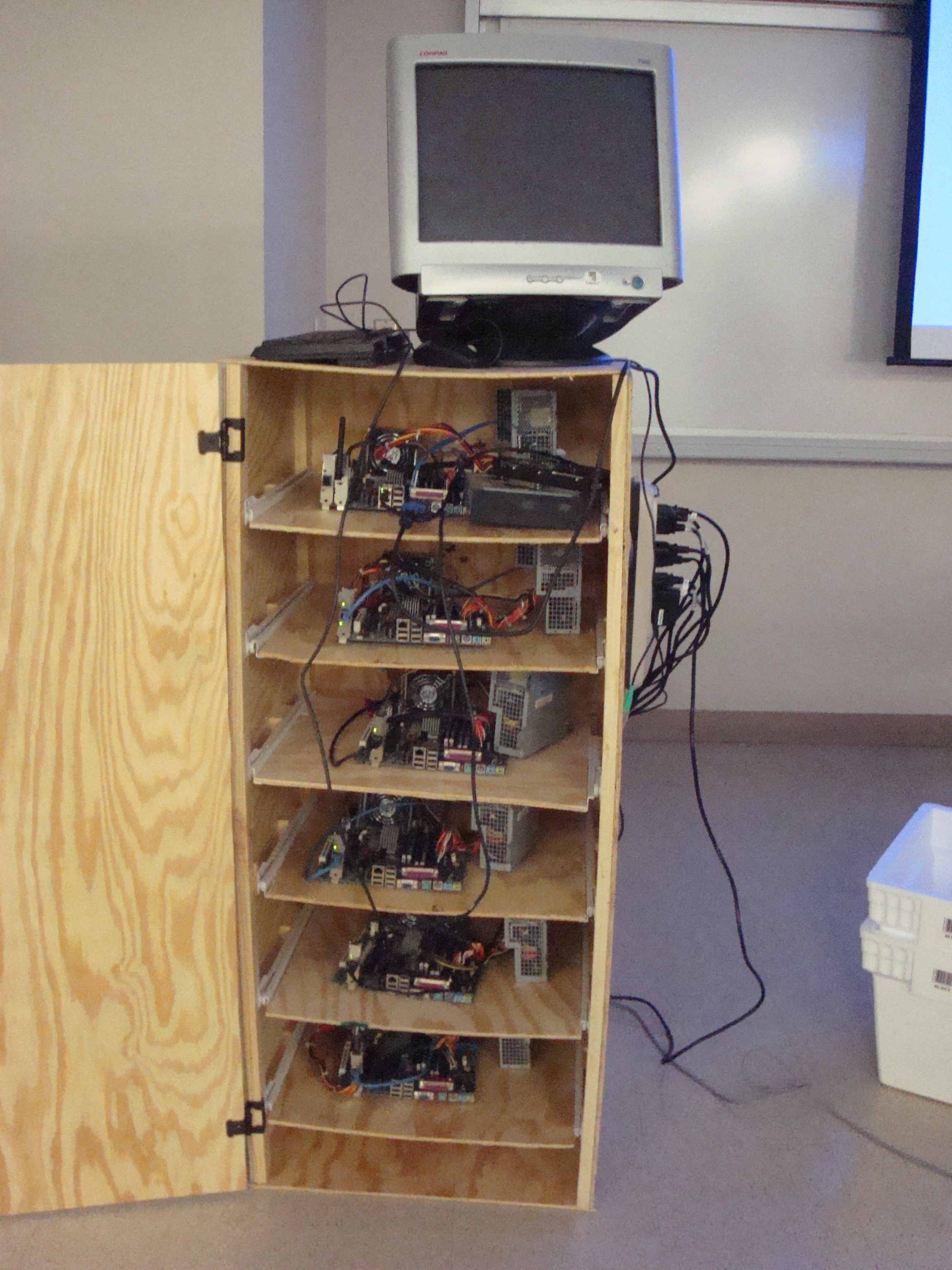

It apparently wasn't inspired by Microwulf, but Helmer is a rendering cluster in an IKEA cabinet, built by Janne at Svensk Film Effekt in Uppsala, Sweden. The Helmer website provides a nice level of detail describing the project. It has enough similarities that I thought it worthwhile to include it here for others to enjoy. (If you've read this far, I'm assuming you're enjoying this!)

|

|

|

The next "offspring" cluster I heard about was "David 1", built by Michael Everhart, Brad Eidschun, Scott Munizza, and Dr. Jose D'Arruda at the University of North Carolina at Pembroke, in Pembroke, North Carolina (USA).

|

|

|

The next "offspring" system I heard about was "Grendel", built by Jason Bowen at the University of California at San Francisco, in San Francisco, California (USA). He uses it in their Department of Radiology and Biomedical Imaging for "image processing, reconstructions, data analysis, etc."

|

|

|

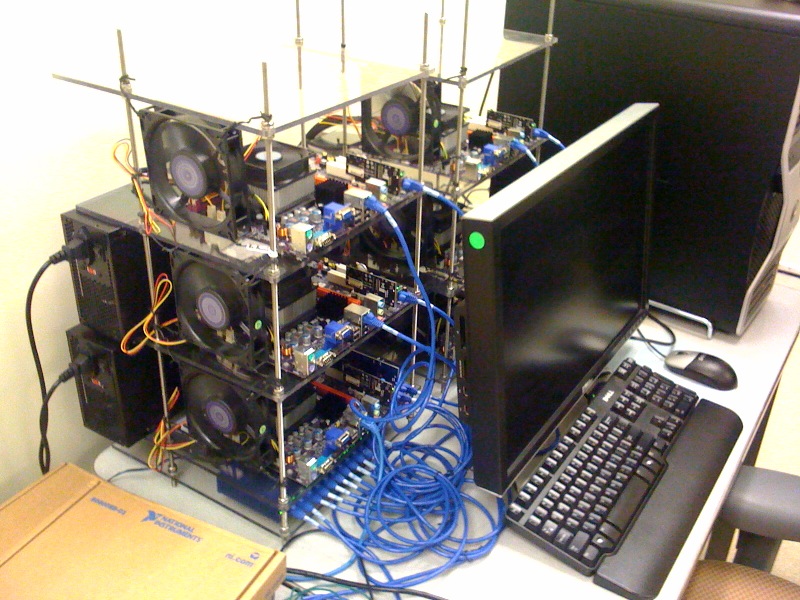

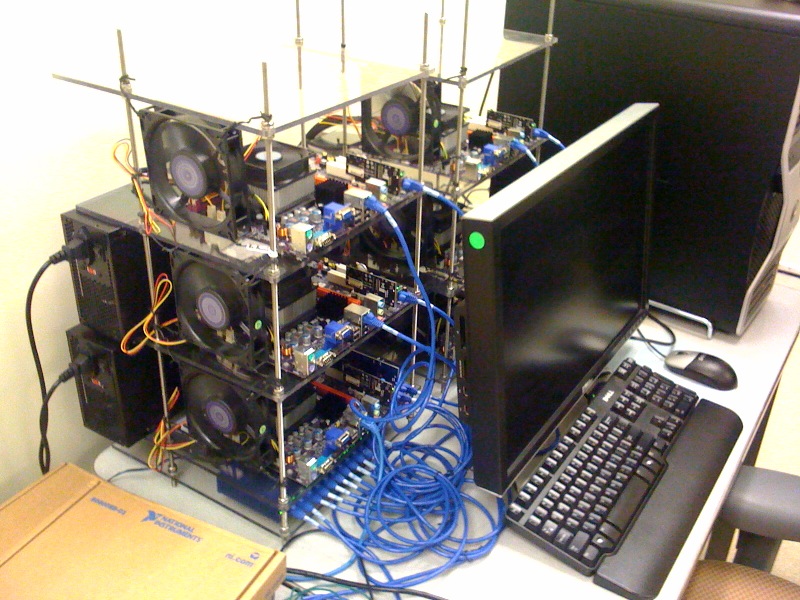

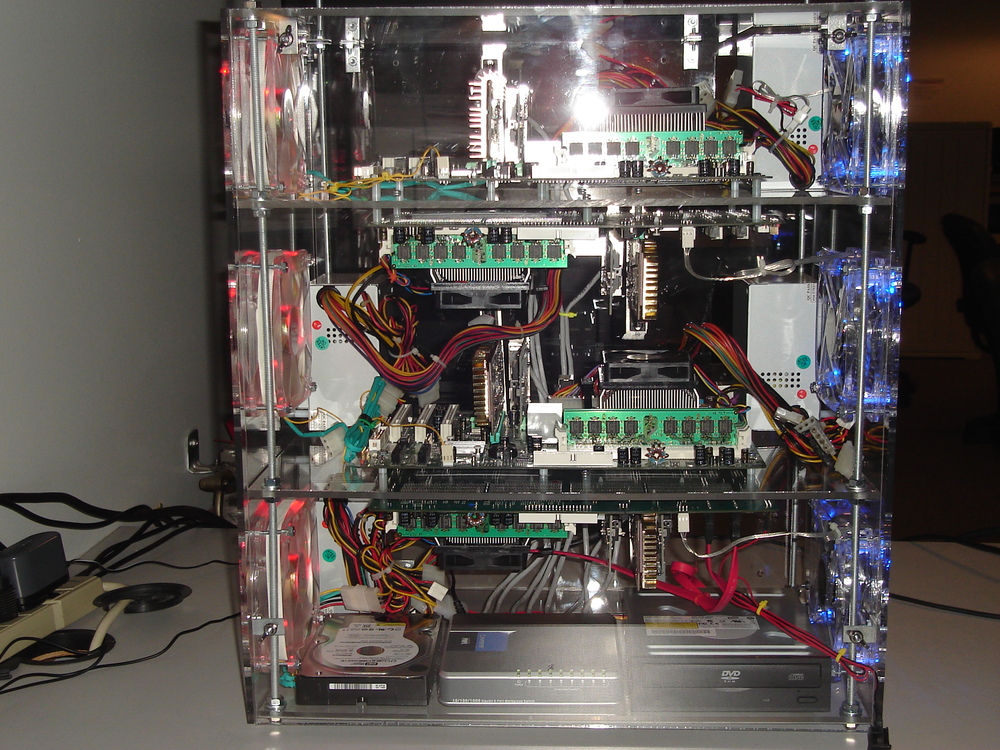

Next was Slayer, the Microwulf of Marquette, built by master's students Adam Koehler and Michael Schultz under the guidance of Dr. Craig Struble in the Dept of Mathematics, Statistics, and Computer Science at Marquette University, in Milwaukee, Wisconsin (USA). Adam sent me this note:

One design alteration of note is that we chose to have the top most layer only have the upward facing slave node. This allows for easy viewing and description of parts of the nodes when explaining to students at fairs held by local high schools or junior highs. Also contrary to many of the implementations, Slayer is fully enclosed by plexiglass; another design choice partially based on not wanting wandering fingers touching the cluster's parts.

The Slayer website documents their project nicely. They have even color-coded their intake fans (blue) and exhaust fans (red)!

|

|

|

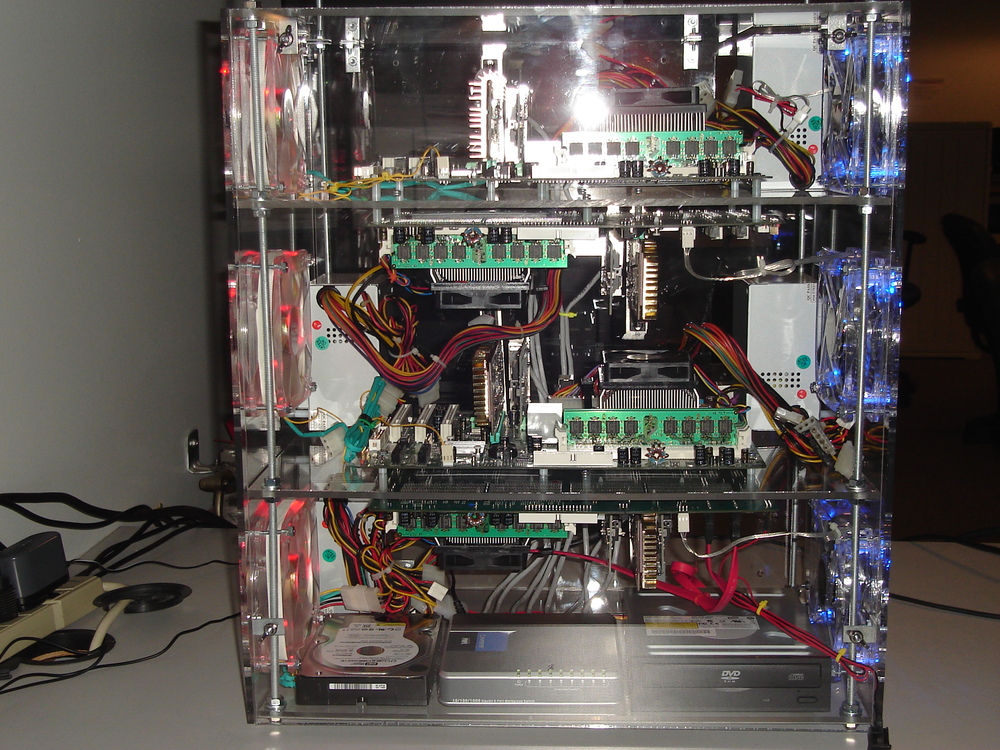

Another related cluster is this cool 2-node, 4-CPU mini-cluster built by Laurent Damay (lolobrin), somewhere in France, I think. Using some of the ideas of Microwulf, the two-node design eliminates the need for a switch, since you can just connect the nodes using a crossover cable. This cluster uses a different version of Linux (Debian), uses OpenMosix to share memory across the nodes, and uses PVM instead of MPI. I like it, because it shows you can build a useful mini-cluster without sticking to the Microwulf recipe. His site also includes several nicely documented parallel programming examples. (If your French skills are like mine, you may find Google Translate to be useful.)

|

|

|

Another is Pangloss, a cluster built by Richard Faulkner, Garrett Richard, Ojasvi Dubey, Rubab Sayeed, Evan O'Donovan, Kent Klymenko, and Cameron McInally at Fordham University in the Bronx, New York (USA). They were hoping to use it to calculate pi using the Leibniz method, but they seem to have had some motherboard problems. Since they got three of the four nodes working, they get an 'A' for effort...
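
For readers unfamiliar with it, the Leibniz method sums the series pi = 4 * (1 - 1/3 + 1/5 - 1/7 + ...). The Pangloss code itself isn't described here, so the following is only a minimal single-machine sketch of how such a sum could be divided into independent chunks, one per node. The names `split_range`, `leibniz_partial`, and `leibniz_pi` are hypothetical, and on a real cluster each chunk would be handed to a different node (for example via MPI) rather than summed in a loop.

```python
import math


def split_range(n_terms, n_chunks):
    """Split the term indices 0..n_terms-1 into contiguous (start, stop) chunks."""
    if n_terms <= 0 or n_chunks <= 0:
        raise ValueError("n_terms and n_chunks must be positive")
    size = -(-n_terms // n_chunks)  # ceiling division
    return [(lo, min(lo + size, n_terms)) for lo in range(0, n_terms, size)]


def leibniz_partial(start, stop):
    """Sum the Leibniz terms (-1)^k / (2k + 1) for k in [start, stop)."""
    total = 0.0
    for k in range(start, stop):
        sign = -1.0 if k % 2 else 1.0
        total += sign / (2 * k + 1)
    return total


def leibniz_pi(n_terms, n_chunks=4):
    """Approximate pi by adding up per-chunk partial sums.

    Each chunk is independent of the others, which is what makes the
    series easy to spread across cluster nodes; here the chunks are
    simply evaluated one after another.
    """
    partials = [leibniz_partial(lo, hi) for lo, hi in split_range(n_terms, n_chunks)]
    return 4.0 * sum(partials)


if __name__ == "__main__":
    estimate = leibniz_pi(1_000_000, n_chunks=4)
    print(f"estimate = {estimate:.8f}, error = {abs(estimate - math.pi):.2e}")
```

The series converges slowly (the error shrinks roughly in proportion to one over the number of terms), which is one reason it makes a handy toy workload for a small cluster.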

|

|

|

Another is the tinyHPC cluster by Mukarram Ahmad, a student at Duke University, in Durham, North Carolina (USA). His system started out small, but has grown significantly since then.

|

|

|

Another work in progress is the SCrappy Cluster by Jason Ernst at the University of Guelph, in Ontario, Canada.

|

|

|

Another system based on Microwulf is Ukeinwulf, a 6-node cluster constructed by the synthetic and theoretical inorganic chemist Dr. Dimitris Manganas and the electrical engineer George Manganas. Each node has an AMD Phenom (quad-core) CPU, providing 24 cores total. It is programmed and administered by Dr. Manganas. Ukeinwulf currently serves as a computational facility for the inorganic chemistry group of Professor Panayiotis Kyritsis at the University of Athens. Dr. Manganas is using it for ab initio quantum chemical calculations on synthetic biomimetic complexes. Please click the picture for more details.

|

|

|

The Kunkunguo group from the College of Material Science and Engineering of Hunan University has also built a Beowulf cluster based on Microwulf, to help them conduct research on shapes of biological membranes. They were also kind enough to send this list of updates to Tim's system configuration notes.

|

|

|

George Perdrizet built Vostok, a cluster of laptops he configured by following our instructions. More comments are available on reddit.

|

|

|

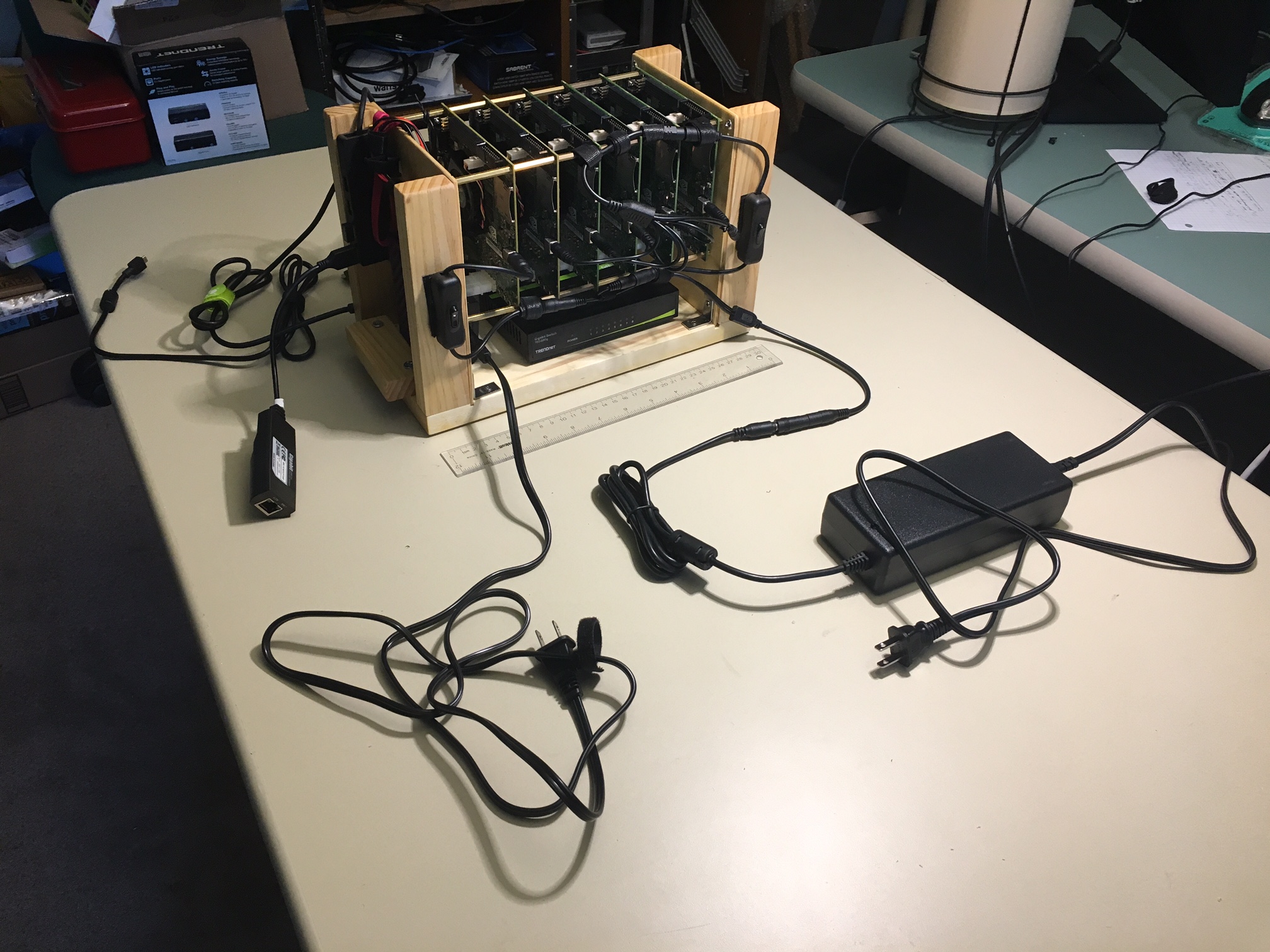

Libby Shoop and her students at Macalester College built Rosie and Rosie2.0 (pictured), a 6-node cluster built from NVIDIA Jetson TK1 single-board computers. Each Jetson has a quad-core ARM processor and a 192-core CUDA-capable GPU. Rosie2.0's nodes all share a common solid-state drive (SSD), communicate through a Gigabit Ethernet switch, and are all powered by a single power supply, and the system is powered on with a single on-off switch.

|

|

That's all of the related systems and pictures I know of. Many other people have contacted me and said they were planning to build personal clusters based on the Microwulf design, so I have no doubt that there are other related systems out there. If you have built a Microwulf-inspired cluster, please send me a picture and any other relevant information, and I'll add it to this page. If you have a project website, include the URL and I'll include that too.

Finally, I've also heard from people who have built small-footprint, computationally dense clusters that predate Microwulf and Little Fe. These systems include: